Deprecated Features:

- N-VDS host switch (only the DVS can be used)

- KVM hypervisor (only ESXi hypervisors and bare-metal servers are supported)

- NSX Advanced Load Balancer Policy API and UI (the focus shifts to the Advanced Load Balancer managed outside of NSX)

High Level Architecture of NSX

The architecture has not changed from the previous NSX-T 3.x release: NSX still has a management plane, a control plane and a data plane (the latter provided by hypervisors, bare-metal servers and NSX Edges).

Management and Control Planes

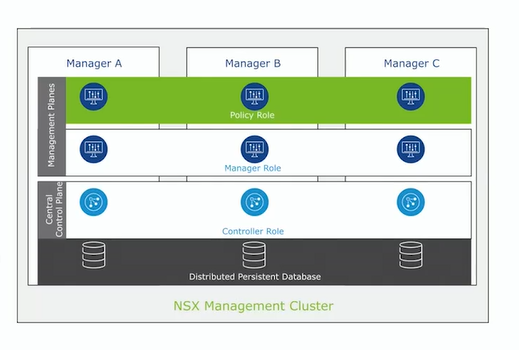

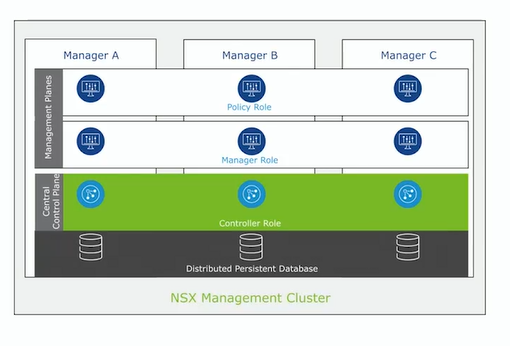

Both planes are built into the NSX management cluster; specifically, they run inside the NSX Manager nodes.

The management plane provides the UI and the REST API through which users submit their configuration.

The control plane, by contrast, computes the network runtime state and distributes it.

In a production environment the NSX management cluster is formed by a group of three virtual machines called NSX Manager nodes. Three nodes are needed for high availability and scalability.

As mentioned above, every node hosts both the management plane and the control plane:

– the manager and policy roles are part of the management plane

– the controller role is part of the Central Control Plane (CCP)

The state of the three NSX Manager appliances is replicated and distributed in a persistent database (CorfuDB).

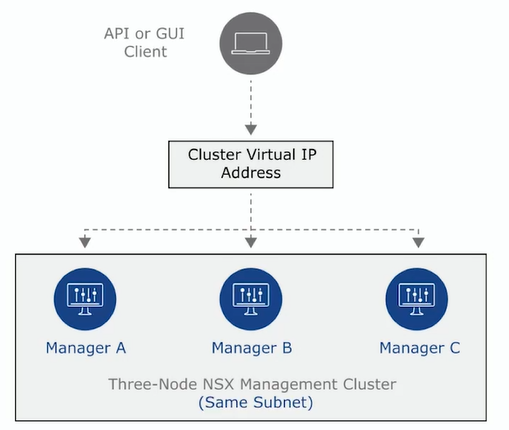
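To give an idea of what talking to the management plane's REST API looks like, here is a minimal sketch that only builds (and does not send) a request for the cluster status. The host name and credentials are placeholders, and the endpoint path `/api/v1/cluster/status` is written as I remember it from the NSX API reference, so please verify it against the API guide for your version before relying on it.

```python
import base64
import urllib.request


def build_cluster_status_request(manager: str, user: str, password: str) -> urllib.request.Request:
    """Build (but do not send) a GET request for the NSX cluster status."""
    # Assumption: endpoint path taken from memory of the NSX API reference.
    url = f"https://{manager}/api/v1/cluster/status"
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    req = urllib.request.Request(url, method="GET")
    req.add_header("Authorization", f"Basic {token}")
    req.add_header("Accept", "application/json")
    return req


# Placeholder host and credentials, for illustration only.
req = build_cluster_status_request("nsx-mgr.example.com", "admin", "changeme")
print(req.get_method(), req.full_url)
```

Sending it would be a matter of passing `req` to `urllib.request.urlopen` with a TLS context that trusts the manager's certificate.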

NSX Manager Cluster with VIP

With three appliances, a single point of access is convenient. NSX natively supports a Virtual IP (VIP) address for accessing the cluster, but it comes with some requirements and limitations:

- All managers must be in the same subnet

- One manager node is elected as leader

- VIP is attached only to the leader

- Traffic is not balanced across all the managers

- Single IP is used for API and UI access

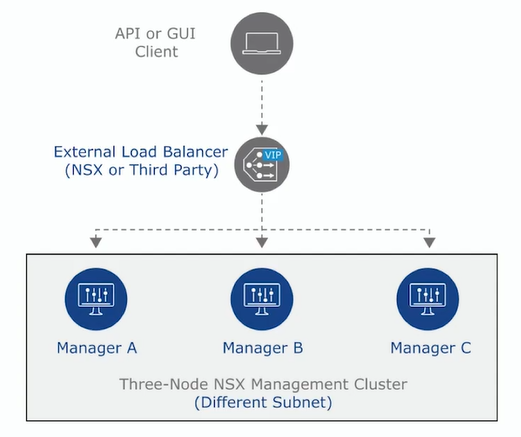
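The VIP behaviour listed above can be pictured with a small toy model. This is only a sketch: the node names and addresses are made up, and "first healthy node wins" is my stand-in for the real leader election, which this post does not describe.

```python
from dataclasses import dataclass


@dataclass
class Manager:
    name: str
    ip: str
    healthy: bool = True


def elect_leader(managers):
    """Return the first healthy manager (a stand-in for the real election)."""
    for manager in managers:
        if manager.healthy:
            return manager
    return None


def resolve_vip(managers):
    """All VIP traffic lands on the current leader only; nothing is balanced."""
    leader = elect_leader(managers)
    return leader.name if leader else None


# Hypothetical cluster: all three managers sit in the same subnet.
cluster = [
    Manager("nsx-mgr-01", "10.0.0.11"),
    Manager("nsx-mgr-02", "10.0.0.12"),
    Manager("nsx-mgr-03", "10.0.0.13"),
]

print(resolve_vip(cluster))   # VIP is served by the current leader
cluster[0].healthy = False    # simulate a failure of the leader
print(resolve_vip(cluster))   # VIP moves to the newly elected leader
```

Note that at any point in time only one manager answers on the VIP, which is exactly why the traffic is not balanced.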

NSX Manager Cluster with Load Balancer

You can also use an external load balancer to manage access to the NSX Manager cluster, which brings the following benefits:

- Managers can be in different subnets

- All nodes are active

- GUI and API are available on all Managers (supporting more API load for example)

- Traffic going through the load balancer VIP is balanced across all the managers
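To contrast this with the cluster VIP, here is a minimal sketch of requests being spread over all three managers. Round robin is simply my choice for the illustration; a real load balancer would add health checks and other settings that are outside the scope of this post, and the manager names are placeholders.

```python
from collections import Counter
from itertools import cycle


def make_balancer(managers):
    """Return a function that picks the next manager in round-robin order."""
    pool = cycle(managers)

    def pick():
        return next(pool)

    return pick


managers = ["nsx-mgr-01", "nsx-mgr-02", "nsx-mgr-03"]
pick = make_balancer(managers)

# Nine API/UI requests end up evenly distributed: every node is active.
hits = Counter(pick() for _ in range(9))
print(hits)
```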

NSX Policy Role

- Provides a central location for configuring networking, security and services in the environment

- Allows users to configure the environment through the UI

- Takes the desired state specified by the user and configures the underlying systems accordingly, so the user does not have to worry about the current state or the individual implementation steps

NSX Manager Role

Responsible for taking the desired state from the policy role and making sure that everything is configured as it should be:

- Installs and prepares the data plane

- Receives input from the policy role and validates the configuration

- Collects data and statistics from the data plane components

Policy and Manager flow

These roles work together: when something changes and must be saved, the data is written to the distributed database mentioned earlier. This database is called CorfuDB and exists on every NSX Manager node.

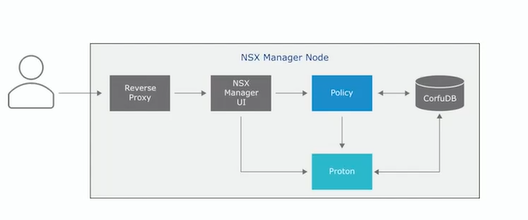

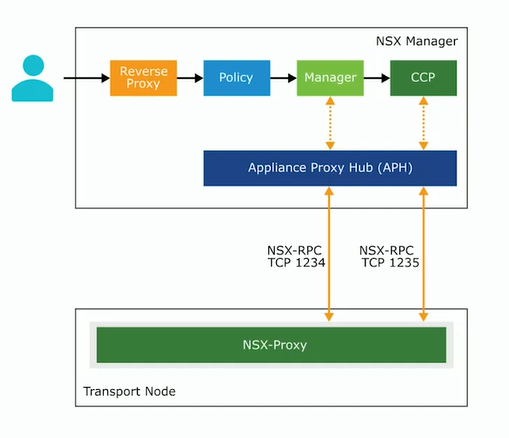

How the Manager component (its service is called Proton) and the Policy component work together is explained in the picture below.

- The access point to the NSX Manager is the Reverse Proxy

- The policy role manages the network and security configuration and hands it to the manager role to be enforced

- The Proton service receives the configuration and manages different functions such as switching, routing and firewalling

- CorfuDB stores the data coming from the Policy and Proton configuration

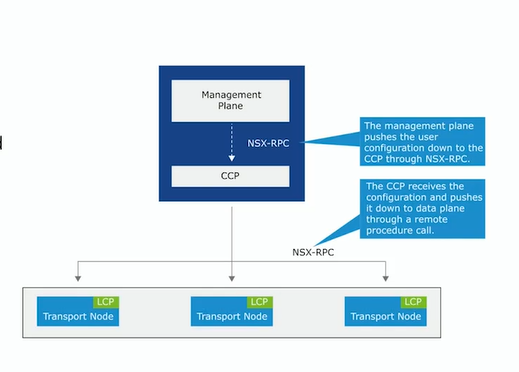
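The flow between Policy, Proton and CorfuDB can be sketched with a toy model. Everything here is hypothetical: the class names, the `display_name` and `subnet` fields, and the dictionary standing in for CorfuDB are only there to show the order of the hand-offs, not how NSX is implemented internally.

```python
class CorfuStore:
    """Dict-backed stand-in for the distributed database."""

    def __init__(self):
        self.records = {}

    def save(self, key, value):
        self.records[key] = value


class Proton:
    """Stand-in for the manager role: validates and realizes the config."""

    def __init__(self, store):
        self.store = store
        self.realized = {}

    def apply(self, path, config):
        # Hypothetical validation rule, for illustration only.
        if not config.get("display_name"):
            raise ValueError(f"{path}: display_name is required")
        self.realized[path] = dict(config)
        self.store.save(f"proton{path}", dict(config))


class Policy:
    """Stand-in for the policy role: records the intent, hands it to Proton."""

    def __init__(self, proton, store):
        self.proton = proton
        self.store = store

    def declare(self, path, config):
        self.store.save(f"policy{path}", dict(config))  # desired state
        self.proton.apply(path, config)                 # realization


store = CorfuStore()
policy = Policy(Proton(store), store)
policy.declare("/segments/web", {"display_name": "web", "subnet": "10.10.10.1/24"})
print(sorted(store.records))
```

The user only states what should exist; both the intent and the realized configuration end up in the same store.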

NSX Controller

The desired state is pushed to the Central Control Plane (CCP), which is responsible for configuring the data plane. Main functions of the NSX Controller:

- provides control plane functionality for switching, routing and firewalling

- computes the runtime state according to the configuration received from the management plane

- distributes the topology information collected from the data plane

- pushes stateless configuration to the forwarding engines

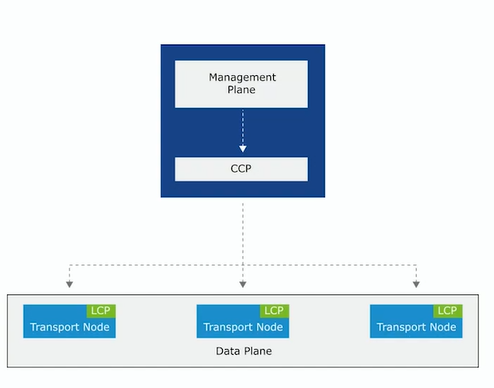

Control Plane

The control plane is divided into the CCP, as mentioned before, and the Local Control Plane (LCP), which runs on each transport node (ESXi, bare metal, Edge node).

The CCP computes the ephemeral runtime state based on the configuration received from the management plane. It also disseminates the information reported by the data plane nodes through the LCP.

The LCP monitors the local link status and computes the local ephemeral runtime state based on the updates received from the data plane itself and from the CCP. It pushes stateless configuration to the forwarding engines.

The CCP receives the configuration from the NSX Manager and propagates it to the LCP of the transport nodes.

The LCP on each transport node interacts with the CCP: if a change occurs, the LCP notifies its assigned CCP, which then propagates the change to the other transport nodes.
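The notify-and-propagate loop between LCP and CCP can be sketched like this. It is a toy model under my own assumptions: the class and method names are hypothetical, and the "runtime state" is reduced to a simple key/value entry (here, which host a VM lives on).

```python
class LocalControlPlane:
    """Toy LCP running on one transport node."""

    def __init__(self, node):
        self.node = node
        self.table = {}   # runtime state known to this transport node
        self.ccp = None

    def report(self, key, value):
        """A local change happened: update own table, then notify the CCP."""
        self.table[key] = value
        self.ccp.on_report(self, key, value)

    def receive(self, key, value):
        """Accept an update propagated by the CCP."""
        self.table[key] = value


class CentralControlPlane:
    """Toy CCP: keeps the global view and fans updates out to the LCPs."""

    def __init__(self):
        self.lcps = []
        self.state = {}

    def register(self, lcp):
        lcp.ccp = self
        self.lcps.append(lcp)

    def on_report(self, source, key, value):
        self.state[key] = value
        for lcp in self.lcps:
            if lcp is not source:   # the reporter already knows
                lcp.receive(key, value)


ccp = CentralControlPlane()
esx1, esx2, edge1 = (LocalControlPlane(n) for n in ("esxi-01", "esxi-02", "edge-01"))
for lcp in (esx1, esx2, edge1):
    ccp.register(lcp)

esx1.report("vm-web-01", "esxi-01")   # e.g. a VM appears on esxi-01
print(esx2.table, edge1.table)
```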

Control Plane Sharding

Since the NSX management cluster includes three nodes, the CCP uses sharding to distribute the workload, assigning a subset of the transport nodes to each manager/controller node.

Each transport node is assigned to a controller for L2 and L3 configuration and for the distribution of DFW rules.

Each controller receives the configuration updates from the management and data planes, but maintains only the information relevant to the nodes assigned to it.

When a controller fails, the two remaining ones recalculate and redistribute the load between themselves. This ensures high availability and has no impact on data plane traffic flow.

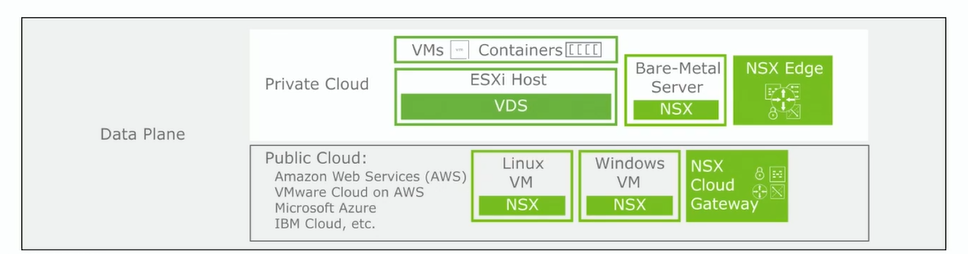

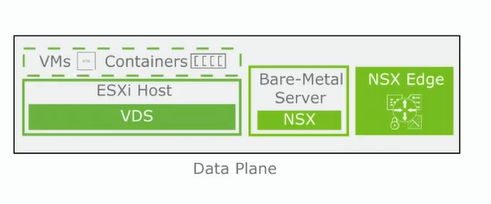
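To make the sharding and rebalancing idea more concrete, here is a small sketch. I am using rendezvous (highest-random-weight) hashing purely as an illustration, because it has the nice property that only the nodes of the failed controller get reassigned; I am not claiming this is the algorithm NSX actually uses. Controller and node names are made up.

```python
import hashlib


def score(controller, node):
    """Deterministic pseudo-random weight for a (controller, node) pair."""
    digest = hashlib.sha256(f"{controller}:{node}".encode()).hexdigest()
    return int(digest, 16)


def assign(nodes, controllers):
    """Map each transport node to the controller with the highest score."""
    return {n: max(controllers, key=lambda c: score(c, n)) for n in nodes}


controllers = ["ctrl-1", "ctrl-2", "ctrl-3"]
nodes = [f"esxi-{i:02d}" for i in range(1, 10)]

before = assign(nodes, controllers)

# Simulate the failure of ctrl-2: the two survivors take over its nodes.
survivors = [c for c in controllers if c != "ctrl-2"]
after = assign(nodes, survivors)

moved = [n for n in nodes if before[n] != after[n]]
print("reassigned after failure:", moved)
```

Nodes that were already assigned to a surviving controller keep their assignment, so the failure only touches the shard of the controller that went away.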

Data Plane

The data plane is composed of different components and functions:

- multiple endpoints (ESXi, bare-metal servers, NSX Edge nodes)

- it hosts different types of workloads: virtual machines, containers and applications running on bare-metal servers

- forwards the data plane traffic

- applies logical switching, distributed and centralized routing, and firewall filtering

The purpose of the data plane is to forward packets based on the configuration pushed by the control plane, and to report topology information back to it.

- The data plane maintains the status of the links/tunnels and manages failover

- stateless forwarding based on the tables and rules pushed by the control plane

- packet-level statistics

The data plane supports different types of transport nodes:
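"Stateless forwarding based on pushed tables" can be pictured with a tiny sketch. The table layout (destination MAC to tunnel name), the class name and the counter names are all my own assumptions for the illustration; the real forwarding engines are of course far more involved.

```python
from collections import Counter


class ForwardingEngine:
    """Toy forwarding engine: decisions depend only on the pushed table."""

    def __init__(self):
        self.table = {}
        self.stats = Counter()   # packet-level counters, not per-flow state

    def push_table(self, entries):
        """Called by the control plane: replace the whole table."""
        self.table = dict(entries)

    def forward(self, dst_mac):
        """Look up the destination; nothing about the packet is remembered."""
        out = self.table.get(dst_mac)
        self.stats["forwarded" if out else "no_match"] += 1
        return out


engine = ForwardingEngine()
# Hypothetical entries pushed by the control plane.
engine.push_table({"00:50:56:aa:bb:01": "tunnel-to-esxi-02"})

print(engine.forward("00:50:56:aa:bb:01"))
print(engine.forward("00:50:56:aa:bb:99"))
print(dict(engine.stats))
```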

- ESXi Hypervisor

- Bare metal: Linux and Windows servers

- NSX Edge: VM or bare-metal form factor

Data Plane Communication

The Appliance Proxy Hub (APH) acts as the communication channel between the NSX Manager and the transport nodes. It runs as a service on the NSX Manager, provides secure connectivity using NSX-RPC, and uses two specific ports: TCP 1234 and TCP 1235.

Another important port is TCP 443 between the NSX Managers and the vCenter/ESXi nodes.

I’m not covering the infrastructure ports for services like SSH, DNS, Syslog and NTP here; you can find the complete list of required ports at https://ports.esp.vmware.com/home/NSX
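If you want to quickly verify that the ports mentioned in this section are reachable through your firewalls, a plain TCP connect test is usually enough. The sketch below uses only the Python standard library; the port labels are my own shorthand and the host name in the usage comment is a placeholder. Run it from a host that is supposed to be able to reach the target.

```python
import socket

# Ports named in this post; the labels are informal shorthand.
NSX_PORTS = {1234: "APH / NSX-RPC", 1235: "APH / NSX-RPC", 443: "HTTPS"}


def tcp_port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port can be established."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution errors.
        return False


def check_ports(host: str, ports=NSX_PORTS) -> dict:
    """Return {port: reachable} for every port in the given mapping."""
    return {port: tcp_port_open(host, port) for port in ports}


# Usage (placeholder host name):
# print(check_ports("nsx-mgr-01.example.com"))
```

A successful connect only proves that the TCP port is open; it says nothing about whether the service behind it is healthy.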

Official Documentation for much more detailed information

This is part of a series of blog posts related to NSX:

– Part 2 NSX Infrastructure Preparation

– Part 3 Logical Switching in NSX (Coming Soon)

– Part 4 Logical Routing in NSX (tbd)

– Part 5 Logical Bridging in NSX (tbd)

– Part 6 Firewall in NSX (tbd)

– Part 7 Advanced Threat Prevention (tbd)

– Part 8 Services in NSX (tbd)

– Part 9 NSX Users and Roles (tbd)

– Part 10 NSX Federation (tbd)